Bulb Flash: A Few Tips to Reduce Cost and Optimize Performance in Azure!

May 15, 2010

Applications deployed on Azure are meant to (1) perform better at (2) a lower cost.

As a software developer, it's inherently your job to ensure this holds true.

Here are a few pointers to keep in mind when deploying applications on Azure to make sure your clients are happy :-)

Application Optimizations

- The cloud is stateless, so if you are migrating an existing ASP.NET site and expect to run multiple web role instances, you cannot use the default in-memory session state. You basically have two options:

- Use ViewState instead. ASP.NET view state is an excellent solution so long as the amount of data involved is small. But if the data is large, you increase your data traffic, which not only hurts performance but also accrues cost. Remember, inbound traffic is $0.10/GB and outbound is $0.15/GB.

- The second option is to persist the state to server-side storage that is accessible from multiple web role instances. In Windows Azure, the server-side storage could be either SQL Azure, or table and blob storage. SQL Azure storage is relatively expensive compared to table and blob storage. An optimal solution is to use the session state sample provider that you can download from http://code.msdn.microsoft.com/windowsazuresamples. The only change required for the application to use a different session state provider is in the Web.config file.

- Remove expired sessions from storage to reduce your costs. You could add a task to one of your worker roles to do this.

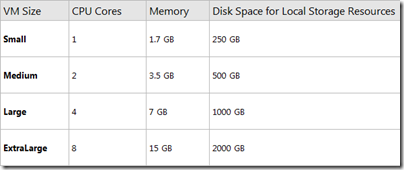
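To get a feel for how ViewState size turns into a bill, here is a rough back-of-the-envelope sketch in Python. It uses the transfer prices quoted above ($0.10/GB in, $0.15/GB out). The function name, the traffic figures, and the assumption that ViewState travels out with every page and back in with every postback are illustrative only, not measurements.

```python
# Rough estimate of monthly data-transfer cost caused by ViewState.
# Prices are the ones quoted in this post; everything else is an assumption.

INBOUND_PRICE_PER_GB = 0.10   # USD, traffic into the data center
OUTBOUND_PRICE_PER_GB = 0.15  # USD, traffic out of the data center
BYTES_PER_GB = 1024 ** 3


def viewstate_monthly_cost(viewstate_bytes, page_views, postback_ratio=1.0):
    """Return the estimated monthly transfer cost (USD) for ViewState alone.

    viewstate_bytes: size of the serialized ViewState per page (hypothetical)
    page_views:      pages served per month (hypothetical)
    postback_ratio:  fraction of page views that post the ViewState back
    """
    outbound_gb = viewstate_bytes * page_views / BYTES_PER_GB
    inbound_gb = viewstate_bytes * page_views * postback_ratio / BYTES_PER_GB
    return outbound_gb * OUTBOUND_PRICE_PER_GB + inbound_gb * INBOUND_PRICE_PER_GB


if __name__ == "__main__":
    # Same traffic, two different ViewState sizes (both figures are made up).
    small = viewstate_monthly_cost(2 * 1024, 5_000_000, postback_ratio=0.5)
    large = viewstate_monthly_cost(200 * 1024, 5_000_000, postback_ratio=0.5)
    print(f"2 KB ViewState:   ${small:.2f} per month")
    print(f"200 KB ViewState: ${large:.2f} per month")
```

The cost grows linearly with ViewState size, so trimming what goes into view state pays off directly on busy pages.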

- Don’t go overboard creating multiple worker roles that each do small tasks. Remember, you pay for each role deployed, so try to utilize each node’s compute power to its maximum. You may even decide to go for a bigger node and have the same role do multiple tasks instead of creating a new worker role.
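The last two points can be combined: a single worker role can host several small periodic jobs, including the expired-session cleanup, rather than each job getting its own role. The sketch below is plain Python standing in for a worker role's run loop, not Azure SDK code. The in-memory `sessions` dictionary, the task names, and the intervals are all hypothetical.

```python
import time


def purge_expired_sessions(sessions, now):
    """Delete sessions whose expiry time has passed; return how many were removed."""
    expired = [sid for sid, expires_at in sessions.items() if expires_at <= now]
    for sid in expired:
        del sessions[sid]
    return len(expired)


class PeriodicTask:
    """A named job that should run once every `interval` seconds."""

    def __init__(self, name, interval, action):
        self.name = name
        self.interval = interval
        self.action = action
        self.next_run = 0.0  # due immediately on the first pass

    def run_if_due(self, now):
        if now < self.next_run:
            return False
        self.action(now)
        self.next_run = now + self.interval
        return True


def run_worker(tasks, clock=time.time, sleep=time.sleep, passes=None):
    """One loop shared by all tasks, so one role instance does all the work."""
    done = 0
    while passes is None or done < passes:
        now = clock()
        for task in tasks:
            task.run_if_due(now)
        sleep(1)
        done += 1


if __name__ == "__main__":
    # Hypothetical session store: session id -> expiry timestamp.
    sessions = {"a": time.time() - 60, "b": time.time() + 3600}
    tasks = [
        PeriodicTask("purge-sessions", 300,
                     lambda now: purge_expired_sessions(sessions, now)),
        PeriodicTask("send-reminders", 60, lambda now: None),  # placeholder job
    ]
    run_worker(tasks, passes=1, sleep=lambda seconds: None)
    print(sorted(sessions))
```

Adding another small job is then one more entry in the `tasks` list, not one more billable role.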

Storage Optimization

- Choosing the right partition key and row key for your tables is crucial. Any process that needs to scan the table across all your partitions will be slow. Basically, if you cannot use your partition key in the “Where” part of your LINQ queries, then you went wrong somewhere.
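To see why the partition key matters, the toy model below stores entities in a dictionary keyed by partition and counts how many entities each kind of query has to touch. It is only a sketch of the idea in Python, not the table service itself. The `ToyTable` class and the customer/order data are made up for illustration.

```python
from collections import defaultdict


class ToyTable:
    """In-memory stand-in for a table whose entities are grouped by partition key."""

    def __init__(self):
        self.partitions = defaultdict(dict)  # partition key -> {row key: entity}
        self.entities_examined = 0           # counts how much work a query did

    def insert(self, partition_key, row_key, entity):
        self.partitions[partition_key][row_key] = entity

    def query_partition(self, partition_key, predicate):
        """Filter on the partition key first: only one partition is read."""
        results = []
        for entity in self.partitions.get(partition_key, {}).values():
            self.entities_examined += 1
            if predicate(entity):
                results.append(entity)
        return results

    def scan(self, predicate):
        """No partition key in the filter: every partition has to be read."""
        results = []
        for rows in self.partitions.values():
            for entity in rows.values():
                self.entities_examined += 1
                if predicate(entity):
                    results.append(entity)
        return results


if __name__ == "__main__":
    table = ToyTable()
    for customer in range(100):
        for order in range(50):
            table.insert(f"customer-{customer}", f"order-{order}",
                         {"customer": customer, "order": order, "total": order * 10})

    table.query_partition("customer-7", lambda e: e["total"] > 400)
    print("with partition key:", table.entities_examined)

    table.entities_examined = 0
    table.scan(lambda e: e["customer"] == 7 and e["total"] > 400)
    print("full scan:", table.entities_examined)
```

Both queries return the same orders, but the scan touches every entity in every partition to find them.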

Configuration Changes

- See if you can make the following configuration changes in the system.net section:

- expect100Continue: The first change switches off the ‘Expect 100-continue’ feature. If this feature is enabled, when the application sends a PUT or POST request, it can delay sending the payload by first sending an ‘Expect 100-continue’ header. When the server receives this message, it uses the information in the header to check whether it can accept the call, and if it can, it sends back a status code 100 to the client. The client then sends the remainder of the payload. This means that the client can check for many common errors without sending the payload. If you have tested the client well enough to ensure that it is not sending any bad requests, you can turn off the ‘Expect 100-continue’ feature and reduce the number of round trips to the server. This is especially useful when the client sends many messages with small payloads, for example, when the client is using the table or queue service.

- maxconnection: The second configuration change increases the maximum number of connections that the web server will maintain from its default value of 2. If this value is set too low, the problem manifests itself through "Underlying connection was closed" messages.
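In configuration terms, both settings go under `<system.net>` in Web.config (or app.config for a worker role). The fragment below is a sketch: the `maxconnection` value of 48 is an arbitrary illustration, not a figure from this post, and should be tuned by measuring your own workload.

```xml
<configuration>
  <system.net>
    <settings>
      <!-- Skip the Expect: 100-continue handshake on PUT/POST requests -->
      <servicePointManager expect100Continue="false" />
    </settings>
    <connectionManagement>
      <!-- Raise the connection limit from the default of 2 (48 is illustrative) -->
      <add address="*" maxconnection="48" />
    </connectionManagement>
  </system.net>
</configuration>
```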

WCF Data Optimizations (When Using Azure Storage)

- If you are not making any changes to the entities that WCF Data Services retrieves, set the MergeOption to “NoTracking” so the context does not spend time tracking them.

- Implement paging. Use the ContinuationToken and the ResultSegment class to implement paging and reduce in/out traffic.
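The paging pattern looks roughly like this. The sketch is plain Python against an in-memory list rather than the storage client library. `fetch_segment`, the integer token, and the page size are hypothetical stand-ins for the ResultSegment and ContinuationToken mentioned above.

```python
def fetch_segment(rows, page_size, continuation_token=None):
    """Return one page of rows plus a token for the next page (None when done)."""
    start = continuation_token or 0
    segment = rows[start:start + page_size]
    next_start = start + len(segment)
    next_token = next_start if next_start < len(rows) else None
    return segment, next_token


def iter_pages(rows, page_size):
    """Yield pages one at a time so the caller can stop as soon as it has enough."""
    token = None
    while True:
        segment, token = fetch_segment(rows, page_size, token)
        yield segment
        if token is None:
            break


if __name__ == "__main__":
    rows = [f"entity-{i}" for i in range(25)]  # made-up data

    # Fetch only the first page; keep the token in case the user asks for more.
    first_page, token = fetch_segment(rows, page_size=10)
    print(len(first_page), "rows fetched; more available:", token is not None)

    # Or walk through every page when all the data really is needed.
    for page in iter_pages(rows, page_size=10):
        print(len(page), "rows in this page")
```

The saving comes from the first case: if the user never asks for the next page, the remaining rows are never transferred.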

These are just a few of the aspects we at Cennest keep in mind when deciding the best deployment model for a project.

Thanks

Cennest!